Posts filed under ‘Projects’

Student Satellite Madness – VSSC ISRO India Trend

The most difficult and expensive phase is sending the satellite into the ether and even more important is what you do with it after because otherwise, it is just space junk. I rest my case. Let me be and ponder why that chicken is crossing the road.

Rise of External Brain – Prosthetic Memory – Chaos

Where were we? Ah yes, my research. That ship has sailed but the light is being carried by Jim Gemmell and Gordon Bell. They recently brought out a book called “Total Recall” and their blog has some wonderful pointers on how we are already on the path to creating digital surrogates on the web. Our bookmarks, history, thoughts, expertise, appointments, events, friends, bits, interests, locations, places, reminders, TV shows and artifacts like photos are all being archived and made available on the web, and with the right aggregator and linking services, one can pull together a fairly accurate digital version of oneself. Irrespective of all this progress, from my early days of internet access and even today, I am aware of the vastness of the WWW, which overwhelms and underwhelms me at the same time because the web is massive and exposes me to many brilliant people and ideas. As the narrator emphasized in “The Hitchhiker’s Guide to the Galaxy”, it is just unbelievably vast, huge and mind-bogglingly big. Whenever I go online, I feel like my neurons are connecting to the collective sentient consciousness of an entire species (well, those who have connectivity) residing on a little blue rock…

December 14, 2009: From the early days of computers, people have speculated that computers would be used to supplement our intelligence. Extended stores of knowledge, memories once forgotten, computational feats, and expert advice would all be at our fingertips. In the last decades, most of the work toward this dream has been in the form of trying to build artificial intelligence. By carefully encoding expert knowledge into a refined and well-pruned database, researchers strove to build a reliable assistant to help with tasks. Sadly, this effort was always thwarted by the complexity of the system and environment, too many variables and uncertainty for any small team to fully anticipate. (cue: ode to Vannevar Bush and “Memex”)

Success now is coming from an entirely unexpected source, the chaos of the internet. Google (and smart search engines of tomorrow) has become our external brain, sifting through the extended stores of knowledge offered by multitudes, helping us remember what we once found, and locating advice from people who have been where we now go. For example, the other day, I was trying to describe to someone how mitochondria oddly have a separate genome, but could not recall the details. A search for [mitochondria] yielded a Wikipedia page that refreshed my memory. Later, I was wondering whether taking the train or flying between Venice and Rome was a better choice; advice arrived immediately on a search for [train flying venice rome]. Recently, I had forgotten the background of a colleague, restored again with a quick search on her name. Hundreds of times, I access the external brain, supplementing what is lost or incomplete in my own. This external brain is not programmed with knowledge, at least not in the sense we expected. There is no system of rules, no encoding of experts, no logical reasoning. There is precious little understanding of information, at least not in the search itself. There is knowledge in the many voices that make up the data on the Web, but no synthesis of those voices.

Perhaps we should have expected this. Our brains, after all, are a controlled storm of competing patterns and signals, a mishmash of evolutionary agglomeration that is barely functional and easily fooled. From this chaos can come brilliance, but also superstition, illusion, and psychosis. While early studies of the brain envisioned it as a disciplined and orderly structure, deeper investigation has proved otherwise. And so it is fitting that the biggest progress on building an external brain also comes from chaos. Search engines pick out the gems in a democratic sea of competing signals, helping us find brilliance. Occasionally, our external brain leads us astray, as does our internal brain, but therein lies both the risk and beauty of building a brain on disorder. I have seen/played with the future and it is not classical AI.

Real-time Search – Query People – Hybridosphere

To illustrate it more clearly, let us have a peek into what Collecta (a self-proclaimed ‘real-time search engine’) is doing. It primarily scours for blogs, tweets, comments and media from the social media landscape and exposes a simple search box on top of the index with scrolling results on every tick of time. While Collecta still does not crawl other activity streams like Delicious, Evernote, Hooeey, Digg etc. (due to lack of API or traction or both), very quickly, I was inundated by opinions of people talking about something that I was interested in knowing, although I suspected that what was happening in the background was a very simple keyword play using data stream algorithms. In other words, I was firing a query and figuratively, getting people and their noisy thoughts, not documents per se, as results. I was pretty amused (even the UI is cute) but not impressed. Quite. There should be a semantic-people-web out there[2]. As for the object-centric web (or 3.0 or 4.0) of the future, someone has to invent it but there are some chains of thought on what searching on that web will look like. I have some ideas too (hey, they’re free) but let us not get ahead of ourselves and stick to the real-time search topic and come back to the Battelle article, shall we? Till then, here are some choice quotes from article(s) and available commentary. It is at best incomplete because a lot of debate must have ensued, ideas spawned, hundreds of blog posts (and tweets and comments) written yada yada yada in the downstream but we will never know about ALL even if citations and trackbacks are supposed to be transitive. If B mentions A and C mentions B, then A should carry over to C (or the commentary should attach to A). This is what I want to work on using a multitude of available APIs (ThoughtReactions seems to be a good name for such an endeavour, no?) but that is a project for the proverbial another day which never ever seems to dawn. Am rattling again. Out with the commentary…
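
For the curious, the transitive citation idea (B mentions A, C mentions B, so C's commentary should attach to A) can be sketched as a walk over a link graph. This is only a rough illustration, not how ThoughtReactions or any real trackback service works: the `mentions` dictionary and both function names are made up, and real data would have to come from whatever APIs happen to expose links.

```python
from collections import deque

def upstream_sources(mentions, post):
    """Return every post reachable from `post` by following mentions."""
    seen = set()
    queue = deque(mentions.get(post, ()))
    while queue:
        current = queue.popleft()
        if current in seen or current == post:
            continue  # already visited, or a cycle back to the start
        seen.add(current)
        queue.extend(mentions.get(current, ()))
    return seen

def commentary_for(mentions, original):
    """Every post that mentions `original`, directly or through a chain."""
    return {p for p in mentions if original in upstream_sources(mentions, p)}

# Hypothetical link graph: each key mentions the posts in its list.
mentions = {
    "C": ["B"],
    "B": ["A"],
    "A": [],
}

print(sorted(upstream_sources(mentions, "C")))
print(sorted(commentary_for(mentions, "A")))
```

With this toy graph, C is counted as commentary on A even though it never links to A directly, which is the whole point of treating trackbacks as transitive.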

Google was the ultimate interface for stuff that had already been said – a while ago. When you queried Google, you got the popular wisdom – but only after it was uttered, edited into HTML format, published on the web, and then crawled and stored by Google’s technology. It’s inarguable that the web is shifting into a new time axis. Blogging was the first real indication of this. Technorati tried to be the search engine for the “live web” but failed[1]. Twitter can succeed because it is quickly gaining critical mass as a conversation hub. But there is ambient data more broadly, in particular as described by John Markoff’s article (posted here). All of us are creating fountains of ambient data, from our phones, our web surfing, our offline purchasing, our interactions with tollbooths, you name it. Combine that ambient data (the imprint we leave on the digital world from our actions) with declarative data (what we proactively say we are doing right now) and you’ve got a major, delicious, wonderful, massive search problem, er, opportunity.

Let’s say you are in the market to buy something – anything. You get a list of top pages for “Canon EOS”, and you are off on a major research project. Imagine a service that feels just like Google, but instead of gathering static-web results, it gathers live-web results – what people are saying, right now about “Canon EOS”? And/or, you could post your query to that engine, and you could get real-time results that were created – by other humans – directly in response to you? Add in your social graph (what your friends, and your friends’ friends, are saying), far more sophisticated algorithms and a critical mass of data – and those results could be truly game changing. OneRiot just launched and I believe we’re taking a piece of the problem by finding the pulse of the web: the content people are talking about today, by having over 2 million people share their activity data, processing it in real-time and creating the first real-time index. The web as it is today, now, tackling news first followed by videos and products next. And therefore, each pulse.

How much journalism these days is spotting patterns from the real-time web? How much is mining the static web? There is another form of journalism, which involves spending time in the real world, but it may be falling out of fashion. I’m not sure that there’s a huge great wobbly lump of wondermoney sitting at the end of the real-time web search rainbow. And if there is, I wonder if it’s much bigger than the one sitting a day further down the line, where the massive outpouring of us auto-digitising hominids has been filtered by the mechanisms we have, more or less, in place now. Google’s big problem isn’t that it can’t be Google a day earlier, it’s that it can’t be cleverer about imparting meaning to what it filters. For now, and until AI gets a lot better, the new worth of the Web is how we humans organise, rank and connect it. The good stuff takes time and thought, and so far nobody’s built an XML-compliant thought accelerator – Rupert Goodwins

Do you think live feeds will be treated similar to how newswires were 30 years ago – considered a pay-for service? You’ve described how Twitter could start making money (via its search) and made me think of the possibility of Google buying Twitter. How different from Twitter should Google’s indexing of Twitter be? Their blog search is dismal because they’re searching good with junk. Look at those Twitter results, I am wondering exactly what utility they actually bring? I mean what value to the user? To be frank I care less about what my friends think about the Canon EOS than about the opinions of professional photographers. In that regard there needs to be some method for improving authority. My social graph is my social graph – it’s of dubious value to me for making buying decisions. All the same, great post as it continues to generate lots of discussion in our office. The point you raise about what this feels like to users is especially near to me – it’s one thing to bring back real time results, and another thing entirely to present them in dynamic, useful ways.

I’m not all that concerned about what twit Twittered what in the last 24 hours, and I think that most of the people that do are twits. For instance, if I was researching a camera or a car, I’d be interested in the best stuff written about it in the last year or so, not in the last five minutes. Sure, a public relations flack might want to keep track of bad things people say on Twitter so they can have their lawyers send them nastygrams, but for ordinary people, it’s just a waste of time. Entertaining maybe, but a waste of time. Right on. It is not just real-time search, there’s a lot more that can cash in on this (and provide great user experience in the process). There will also be a goodly sum of what Rupert calls “wondermoney” racing at lightspeed toward the bank account of the company that will best provide the means to protect privacy of hundreds of millions who have absolutely no need nor any desire to see the dots of their every action and comment connected and delivered to “the matrix”.

This is definitely the next big thing in search. Your articulation of it is perfect. I say this, because I experienced this same thing over the last several weeks when I created a new Twitter account for our new products and wanted to track what people are saying. A quick Twitter search was the answer and a few replies later I had some conversations going and new followers as well. The real-time web will far outweigh the benefits of the archived web, at least for certain types of information. Journalism was the original search engine, albeit with a rather baroque query interface. It tends to adopt the most efficient use of people and technology to produce good data, being a notoriously Darwinist entity, and it’s quite good at adapting quickly – hasn’t taken long for blogs to make their mark. It’s a good thing to track if you want to sniff out utility on the Web – after all, journalism is the first draft of history.

Marketers would love that ambient data but that is a backwards approach to search. I don’t see the usefulness or appetite for people to query about what their friends are doing – especially when it’s already being delivered to them. You really need to see what’s going on in FriendFeed more to grok the real time nature of the web. Look at my realtime feed here for just a small taste – that’s 4,800 hand-picked people being displayed in real-time here. So, I think evolution is the wrong word. Perhaps the right word is “rediscovery”, or “mass public revelation” or “adoption” or something like that. The future was here 15 to 50 years ago. It just wasn’t (to quote the popular phrase) evenly distributed. So maybe all you’re saying is that this particular aspect of search, i.e. routing and filtering, or SDI, or whatever we may call it, is finally “growing” or “spreading”. But “growing” != “evolving”. But search is not evolving; what you are speaking of already exists and has existed.

We are talking about “text filtering” which sounds exactly like an idea that has been around for 40+ years. Here is a description of the problem from http://trec.nist.gov/pubs/trec11/papers/OVER.FILTERING.pdf – a text filtering system sifts through a stream of incoming information to find documents relevant to a set of user needs represented by profiles. Unlike the traditional search query, user profiles are persistent, and tend to reflect a long term information need. With user feedback, the system can learn a better profile, and improve its performance over time. The TREC filtering track tries to simulate on-line time-critical text filtering applications, where the value of a document decays rapidly with time. This means that potentially relevant documents must be presented immediately to the user. There is no time to accumulate and rank a set of documents. Evaluation is based only on the quality of the retrieved set. Filtering differs from search in that documents arrive sequentially over time. This overview paper was from 2002, but the TREC track itself goes back to the 90s and the idea goes back even further. In fact, now that I think of it, I remember talking with a friend at Radio Free Europe (anyone else remember that?) in Prague back in 1995, and he was describing a newswire system that they had, that did this online, real-time filtering. So maybe there’s a shift from static to real-time search in the public, consumer web. But there have been systems (and research) around in other circles that have been doing this for a while.
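
To make the filtering-versus-search distinction above concrete, here is a toy sketch of the idea as described: profiles persist, documents arrive one at a time, the deliver-or-drop decision is made on the spot with no batching or ranking, and feedback adjusts the profile. It is not the TREC track's method or anyone's production system. The `Profile` class, the additive term weights, the threshold and the sample stream are all assumptions made for illustration.

```python
import re

def words_of(text):
    """Lowercased word set of a document."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

class Profile:
    """A persistent user profile: weighted terms plus a delivery threshold."""

    def __init__(self, terms, threshold=1.5):
        self.weights = {t: 1.0 for t in terms}
        self.threshold = threshold

    def score(self, text):
        present = words_of(text)
        return sum(w for t, w in self.weights.items() if t in present)

    def matches(self, text):
        return self.score(text) >= self.threshold

    def feedback(self, text, relevant, step=0.5):
        """Nudge the weights of terms seen in a judged document up or down."""
        present = words_of(text)
        delta = step if relevant else -step
        for term in self.weights:
            if term in present:
                self.weights[term] = max(0.0, self.weights[term] + delta)

def filter_stream(stream, profiles):
    """Yield (user, document) as each document arrives; nothing is held back."""
    for doc in stream:
        for user, profile in profiles.items():
            if profile.matches(doc):
                yield user, doc

# Hypothetical user and stream, purely for illustration.
profiles = {"ana": Profile(["canon", "eos"])}
stream = [
    "Canon EOS firmware update released",
    "Canon printer recall",
    "Train strike in Rome",
]
for user, doc in filter_stream(stream, profiles):
    print(user, "<-", doc)
```

Calling `profiles["ana"].feedback("Canon printer recall", relevant=True)` raises the weight of "canon", so a later printer story would clear the threshold. That is the "learn a better profile over time" part, in its crudest possible form.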

You may note that the link refers to a machine called ‘Memex’, which Vannevar Bush (one of the first visionaries of “automated” information storage and retrieval schemes) wrote about decades before Luhn wrote about SDI. But you could go back a couple of millennia, too – for example: the ancient Greeks argued whether words were real or ideal, representations or hoaxes for “actual observation” (and such disputation persisted throughout the Middle Ages [Occam’s Razor] to this very day [one of the most renowned philosophers of the 20th Century – Ludwig Wittgenstein – probably immensely influenced the AI community without their even being “aware” of it]). The issue that “gizmos” such as SDI and/or AI in general cannot deal with is that the world keeps changing: change is the only constant. Everything is in flux – always! As it always has been, no? The ideas and technology for all search were around way before Alta Vista, and then Google, popularized them.

[1] Technorati is a cautionary tale but then, most blog search engines (Technorati, Icerocket, Tailrank) have not made an impact because the value of pure play search is in doubt. No one wants to go to a search box when there is the triumvirate of Google, Wikipedia and Browser Search Bar. Even Google is neglecting the area (cue: Google Blog Search sucks). Sad really because I feel that blogs empowered the first and therefore, the impressionable pioneering wave of citizen journalism and democratization of media phenomenon (Podcasts, YouTube, Seesmic, Qik etc. followed) that is a promising and enticing field which got washed away while still raw by Twitter (which can still be seen as lazy blogging if one is really looking hard) and the search companies the statusosphere spawned (OneRiot, Topsy, Collecta, Scoopler, TweetMeme). Maybe it is the ‘path of least resistance’ or ‘journalism is not for everybody’ at play here or just that something might be missing like say, attention data that can today be sucked from various places (eg. “implicit web”). Some blogosphere companies still exist and have survived, nay, thrived because they were smart to change their technology, business and operational model like Sphere (where I worked) and another promising company, Twingly (working on ideas such as ‘Channels’ and integrating with rest of mainstream Web 2.0). Am not a betting person but if life depended on it, I predict a revenge of the hybridosphere (blogs plus history, status and trails) when the Twitter fad cools down as well, just another phase of tripe (Facebook has 40 times more updates). We are already seeing it because Twitter is becoming yet another ego-URL store and copy-cat social network where it is becoming increasingly difficult to separate the genuine article from the millions of pretenders, spammers and worse, marketeers.

[2] Between extremes of organized mainstream professional media to unstructured freestyle frivolous noise of jibber-jabber, there is a small, yet significant band of people-centric web which offers a truly multi-opinionated clairvoyance to the world. An analogy is ye faithful human eye which can only see a very small portion of the electromagnetic spectrum. Sure, it would be nice to be able to see the ultraviolet and infrared frequencies but the most interesting things happen in the visible band because it is so colourful and vibrant. There has to be an evolutionary benefit that the eye has settled to its current state. Getting out of the metaphor, this narrow band of semi-professional passionate implicit-explicit human generated content (you call it ‘hybridosphere’ if you like), if captured and processed intelligently, can be made to do some very magical and wonderful things (search, direct and indirect such as ‘related articles’, is just one of the many applications that can be built on top of this foundation and as proof look at crowd powered news site Insttant and sentiment analysis companies like Clara and Infegy) to all stakeholders but most of all, to the general public who just want to see the web as a collective of nice people living harmoniously in a wee global village free from shackles of big media opening up a world of discovery from all parts of our little blue marble in the sky. It is a matter of time and effort (luck is to work on RSSCloud, ThoughtReactions, Histosphere[3] and other neologisms) when we will see such Webfountain’ish hybrid companies (data mix of blogs, status, history, conversation, bookmarks, attention, trails, media, objects etc.) claiming their rightful place in Web 2.0 (or 3.0 or 4.0) pecking order, bringing to the fore badly needed innovation to excavate the people-centric web diamond mine. In my vision, searching in such a world looks figuratively like this…

This is inspired by a scene in “The Time Machine” (2002) where the protagonist Alexander encounters the Vox System in the early 21st century. The virtual assistant (played by Orlando Jones), is seen on a series of glass fibre screens offering to help the hero using a “photonic memory core” linked to every database in the world effectively making it into a compendium of all human knowledge. Since this scene must have been thoroughly researched, it is safe to rip it and suffice to say that an immersive search experience is one where the searcher is virtually forwarded to experts in the area who might have the answer he/she seeks. [edit: 20091214] Apparently, such a thing has been pondered before. Obvious really. It is Battelle again writing for BingTweets Blog, “Decisions are Never Easy – So Far. Part-3” –

Normally a 30 minute conversation is a whole lot better for any kind of complex question. What is it about a conversation? Why can we, in 30 minutes or less, boil down what otherwise might be a multi-day quest into an answer that addresses nearly all our concerns? And what might that process teach us about what the Web lacks today and might bring us tomorrow? The answer is at once simple and maddeningly complex. Our ability to communicate using language is the result of millions of years of physical and cultural evolution, capped off by 15-25 years of personal childhood and early adult experience. But it comes so naturally, we forget how extraordinary this simple act really is. I once asked Larry Page of Google, what his dream search engine looked like. His answer: Computer from Star Trek – an omnipresent, all knowing machine with which you could converse. We’re a long way from that – and when we do get there, we’re bound to arrive with a fair amount of trepidation – after all, every major summer blockbuster seems to burst with the bad narrative of machines that out-think humans (Terminator, Battlestar Galactica, 2001 Space Odyssey, Matrix, I Robot… you get the picture).

Allow me to wax a bit philosophical. While the search and Internet industry focus almost exclusively on leveraging technology to get to better answers, we might take another approach. Perhaps instead of scaling machines to the point of where they can have a “human” conversation with us (a la Turing), perhaps instead (or, as well), we might leverage machines to help connect us to just the right human with whom we might have that conversation? Let me go back to my classic car question to explain – and this will take something of a leap of faith, in that it will require we, as a collective culture, adapt to the web platform as a place where we’re perfectly comfortable having conversations with complete strangers. Imagine I have at my fingertips a service, that allows me to ask a question about which classic car to buy and how, and that engine instantly connects me to an expert – or a range of experts that can be filtered by criteria I and others can choose (collective intelligence and feedback loops are integrated, naturally). Imagine Mahalo crossed with Aardvark and Squidoo, at Google and Facebook scale.

An ‘expert’ of course is still undefined and the jury is still out on what such an entity constitutes. Hey! I never said I have all the answers. Besides, don’t things like call centres, web sites with live chat etc. already handle this rant of human-on-line? And communication is always a problem. So, good luck with that. Live long and prosper.

[3] Let us talk about histosphere. The concept is fairly simple. There are several companies (Hooeey, Google, Infoaxe, Thumbstrips, WebMynd, Iterasi, Timelope, Cluztr, Wowd, Nebulus etc.) that are collecting the browsing history of users mainly through the mechanism of toolbars. On an individual basis, ‘web memory’ has utility and so, users can be convinced that it is a good tool to have and that it is a good idea to share the surf logs with the public at large, not very unlike the case made for social bookmarking. This collective social history (also count Opera Mini logs whose web proxy server is collecting 500 million URLs per day and Mozilla Weave which will have similar numbers soon) is what I call the ‘histosphere’ (a parallel word being the blogosphere and the criminally underexploited bookmarkosphere). A simple theory is that the histosphere is a proper superset of blogosphere and bookmarkosphere and hence it is as useful, if not more so, than both combined. There is a trickle effect at play here. Not all history gets bookmarked and not all bookmarks get blogged. So, the narrow band we talked about above is really narrow but as any signal processing engineer would vouch, we should also count the haze or radiation to make sense of the quasar. Therefore, the same business and technology models of blogosphere (example, Sphere) and bookmarkosphere (example, Digg) can be replicated for the histosphere but given the noisy nature of surf logs, one should apply filters (like ‘engagement metrics’) and use properties of attention data (like ‘observer neutrality’) to deliver better experiences. Google is already trying to do this if one is logged in to get personalized search results but they suck in the rare one-off cases where they are visible. A use-case is to combine web memory with the side-effect of identity provided by toolbars to customize the whole web experience. Everywhere you go, the web memory follows, sifting through the cacophony.
For example, if I am using Infoaxe and go to NYT or WSJ, the publishers will detect that it is me@infoaxe and deliver relevant content (and also ads, sic). Whichever search engine (reference or blog or real-time) loads history (and other streams) onto its cart will no doubt upset the shifting gravy train. Go Hybridosphere!
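
The trickle effect and the ‘engagement metrics’ filter are easy to show with toy data. What follows is a back-of-the-envelope sketch, not how Infoaxe, Hooeey or anyone else processes surf logs. The `Visit` record, the URLs and both cut-offs (30 seconds on page, one revisit) are invented for the example.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Visit:
    url: str
    seconds_on_page: float
    revisits: int

def engaged(visits, min_seconds=30.0, min_revisits=1):
    """Keep URLs where the reader lingered or came back; cut-offs are guesses."""
    return {
        v.url
        for v in visits
        if v.seconds_on_page >= min_seconds or v.revisits >= min_revisits
    }

# Hypothetical surf log for one user.
history = [
    Visit("example.org/essay", 240.0, 2),
    Visit("example.org/ad-redirect", 1.5, 0),
    Visit("example.org/recipe", 95.0, 0),
    Visit("example.org/login", 4.0, 0),
]
bookmarks = {"example.org/essay", "example.org/recipe"}
blogged = {"example.org/essay"}

all_urls = {v.url for v in history}

# Trickle effect: not all history gets bookmarked, not all bookmarks get blogged.
print(blogged < bookmarks < all_urls)

# Noise filter: redirects and login pages drop out of the usable histosphere.
print(sorted(engaged(history)))
```

In this made-up log the engaged set happens to coincide with the bookmarks, which is the optimistic case. The interesting material in a real histosphere would be the engaged pages that nobody bothered to bookmark.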

Running Water – Word Play – Fools Paradise

[edit – 20091119] While I initially did this in jest, it never escaped my purview that “running water” is still a dream chased by over 90% of the world’s population. There is just not enough water (the future wars will be fought over water and all that) and plumbing and there are just too many people. Why, just today, Thomas L. Friedman in his latest piece, “Americans Living in Fools Paradise” quips –

people in the developing world are very happy being poor – just give them a little running water and electricity and they’ll be fine, no worries at all for us

Just gives more weight to the pondering, ain’t it? I guess we just have to live with the knowledge that those of us (believe me, it was a fight to get it working in my house) who have running water are the chosen lucky buggers and could do with a little more modesty in complaining about our pampered and mundane lives. If you are statistics/story inclined, you might want to go see some numbers and realities on Red Button Design (disclaimer: co-founder of this company, James Brown, was a club-mate of mine at University of Glasgow) making acclaimed Reverse Osmosis Sanitation Systems (ROSS) based water purifiers aimed at BoP of the third world.

GE Edison Challenge – Renewable Energy for India

Raghotham in GE Edison Spotting reports on some of the student demonstrations at GE Edison Challenge 2009 in Bangalore (you cannot lure me to use the new name Reddy buggers). Here is a nice photo (of ‘Urjas’ or ‘Tech Innovas’) from IIT-Bombay (used B-word Thackerey morons) followed by clips from other finalists from IIT-Madras (get it?), SVCE, IIT-Kharagpur…

Srinath Ramakkrushnan and his IIT-Madras team, who call themselves ‘Graminavitas’, are a far more ambitious lot, proposing an integrated solution that spans rice de-husking in Natham, a 300-household village 60 km north of Chennai (with a de-husking machine he himself made after a two-year stay in Ujire in Karnataka) to building a micro-grid architecture that would partly use biogas produced from the husk to produce power to providing a workable public toilet system to improve rural sanitation to using the waste from the toilet to produce biogas to replace the need for LPG… phew.

Neha (chirpy 20-something Punjabi kudi in pink tees and blue jeans) and team from Sri Venkateswara College of Engineering are trying to produce electricity using local resources in a village in Tamil Nadu so they can have power supply round-the-clock, instead of just two hours a day. The ‘Energy Boosters’ chose Kaliyapettai village near Chennai, which has a textile mill nearby discharging industrial effluents. Neha and friends used the effluents as nutrients to grow algae on. Algae convert carbon dioxide absorbed from the atmosphere into lipids, which are then converted into biodiesel to generate electricity in a diesel generator. The team grew algae in a tank and have sent in the oil they produced for analysis of its power potential. Neha says the oil produced in 5 days can power lighting for the village’s 600 families through the day, for an initial cost of as little as Rs. 1 lakh (or 2000$).

Shashikant Burnwal, Arnab Chatterjee and Ashim Sardar of IIT-Kharagpur have built a pot-in-pot storage system that helps store vegetables and cooked food at temperatures as low as 8 to 10 degrees Celsius, using nothing more than two earthen pots and a fan picked up from the insides of a desktop computer. Refrigeration, with minimal electricity necessitated by global warming. They have also designed a home cooling system in which sunlight falls on a PVC roof and heats it up, causing airflow between low pressure and high pressure areas, cooling homes – again, no electricity used.

Are these ideas, and those of the other 15 teams, practical, scaleable and worth the trouble? Well, the judges went around grilling the participants on the economics, the scientific principles and technology and the novelty of the ideas. GE and the Indian government’s Department of Science and Technology (through DSIR TEPP program) have already sweetened the deal. Each of the 18 finalist teams will take home Rs.20,000. In addition, GE will award the winning team, to be announced on Friday, Rs.5 lakh and a runner-up Rs. 1 lakh. And, to boot, the DST will consider funding their ideas so they can turn them into reality. While I feel that I have seen some if not all of these ideas during the days when there was only one TV channel in India (so the whole family watched just about everything from cheesy Mahabharatha to agricultural programs on biogas and mushroom farming), I suppose, there are some positives. At least it got some people thinking even if it is heavily incentivized.

Ads as Monetizers for Real-time Search – Puhleeze

As major events unfold, Twitter, Facebook and other similar services are increasingly becoming the nation’s virtual water coolers to become an instant record of Americans’ collective preoccupations. It’s no wonder, then, that pundits and investors are salivating over the prospect of an effective way to search this information. For all the buzz, however, one question remains unanswered: How easily can real-time search turn into real cash?

No one doubts that helping users find fresh, up-to-the-minute content on the Web is valuable. But plenty of other valuable Web services – including content sites, free Web e-mail and social networks – have struggled to find effective business models. “We have no idea how much you can make off of real-time search,” said Danny Sullivan, a veteran search industry analyst and editor of Search Engine Land, an industry blog.

Search advertising is probably the most effective form of marketing ever invented. Because search queries telegraph a user’s intent with precision, they make it possible to match people with the right ads at the right moment. If real-time search is ever to achieve the same kind of magic, it needs a large volume of queries and the same ability to match users’ intent with ads.

Real-time search entrepreneurs dispute this. Others say examples abound of queries that could be matched with ads: a search for tweets about snow conditions may be an advertising opportunity for ski resorts; one about poor cellphone coverage could attract ads from a rival network, and so on. Users are exposing their intent, and you have an opportunity to match it.

Google said that real-time search is valuable, though not necessarily because the queries will generate as much cash as regular searches. “We don’t know enough about what kinds of queries people would issue against real-time data to know how monetizable it is,” said Marissa Mayer. Google wants the Twitter data primarily because its mission is to be comprehensive: Google wants to organize all of the world’s information, including the Web’s fleeting real-time conversations to keep people searching on Google.

Interesting arguments, eh? But quite sad. First of all, real-time web is not just about Twitter (aka, what people are having for their breakfast) which is a post for another day. Secondly, monetization of any web service need not be shovelled into fitting ads (like, depending on the breakfast, show people ads related to butter and jam) which is yet another post. Thirdly, search (and banners) is not the holy grail of monetization. Data is THE currency. Finally, I am on it. Patience, my precious.

FOAF as Identity – Proof by Ye Olde Adage

In both cases, it is pretty clear that FOAF information can be used as an identity. In fact, creating/uploading a FOAF file and using a corresponding URI pointing to it, or some pertinent information inside it, can be a full-fledged identity on the web à la OpenID, with stuff like SSL useful for double verification and such. It is complicated. Don’t ask. Just dig around. While doing so myself to understand this side-effect, I realized that of course FOAF information can be used as identity, and one does not have to be a geek or philosopher to understand how it is feasible. Truth is, this is ancient wisdom as encapsulated in the adage, “a person is known by the company of the friends he/she keeps”. An aha moment indeed. Slick, eh?

BoP Debate-Comment-Response # 02 – Just Blog

Right. Working part-time as a chief executive on a stealth free-market-based ICT education initiative has gifted me spare time to inquire into the BoP phenomenon, particularly, the Cornell + Solae activity in Warangal, India which is quite accessible to me distance-wise. So, the next few days are going to be very content heavy on the blog because I am going to put forth the many thoughts, notes and drawings I have collected over the years. I am just using the Hammond-Karnani-Prahalad debate on NextBillion and the Cornell World Forum thingy as starting points.

Please note that I am thinking out loud. Some of what I say is bound to be stupid and ignorant. I make no apologies but please don’t take anything personally. Feel free to speak your mind – not through the WordPress commenting system but through your blog posts only. I would appreciate it very much. Who knows? Maybe we will work together at some point 🙂

I want to stress the blogging part. I repeat, don’t comment on the posts using the WordPress commenting system. Just blog. For me, the real comment is always the blogging part – which, if you remember, is what blogging was all about, i.e. web log. If I see something, I should ‘comment’ through a bona fide blog post citing the link/content but not through the commenting system of the publishing system.

Using the inbuilt system is against the freedom of speech tenet (because comments go through a lame editorial cycle, for god’s sake) and does no justice to the beautiful idea of pingbacks, which was supposed to be the real fabric of the web after the hyperlink concept.

My bad for this lesson. I feel very strongly about ‘commenting’ systems which are totally redundant, broken and too difficult to maintain when there is an easier alternative. Or maybe, I am just a standard computer science geek.

BoP Debate-Comment-Response # 01 – Primer

“You argue that…”

Am not arguing. There is no point nor any end to that. I only put forth my opinion and now, some observations. Until I furnish proper numbers, reference scholarship and make some actionable points, I am still armchair opinionating. Maybe random debating, but definitely not arguing (an argument, according to a Monty Python skit, being ‘a connected series of statements intended to establish a proposition’).

“Truly, in this world of shades of grey it is difficult to say whether some products are unambiguously good or bad…”

There are several exceptions to this. Some products, services and even, ideas can be unambiguously good or bad. Interesting that I tend to order ‘bad’ first before ‘good’ as a natural tendency. But yes, the jury is still out on BoP and the products/services that have been spun-off from this root.

“There are many examples which are, although not 100% good for every intervening market agent, still very worthy of pursuing…”

Definitely agree. Trials have to happen. The play should go on. Learn from mistakes. Mumbo jumbo. But it has to be asked. What is the tradeoff? Who is doing the cost-benefit analysis? Who is keeping count?

“Mobile phone telephony: A favorite of mine…”

Ah yes. We are getting somewhere. Mobile phones, eh? Seems to be everybody’s favourite and a media darling these days. Cliches aside, this deserves its own post. Coming up next.

Unified Health Policy Needed in India

* Thirty years ago, India could get away with not providing quality sanitation to its people and not being able to eradicate mosquitoes that carried a variety of diseases. It could claim it was too poor.

* Today, India says it is an emerging economic power, quotes robust GDP figures even in an otherwise grim global scenario and glories in sending missions to the moon. Its public health record can no longer be defended.

* Yet, there is something morally disquieting (disgusting) about a system that makes vaccines for the world but cannot deliver them to its own children; and about a gleaming healthcare infrastructure that shuts out hundreds of millions of Indians because they can’t pay the bills.

Ashok then goes on to describe two schemes – ‘Chiranjeevi Yojana’ in Gujarat and ‘Rajiv Arogyasri’ in Andhra Pradesh which should be read on the idiot screen. But it makes the economist in me wonder – is it this onus on health improvement that makes Gujarat and Andhra Pradesh two of the most progressive states in the country, or vice versa?

Between Chiranjeevi and Arogyasri, India has two templates that can potentially revolutionize its public health. But these have to be nationalized to achieve universality and economies of scale.

In any case, I should be doing a one-sheet illustration on the state of health in India one of these days (fingers crossed), although the eSanjeevi Storyboard does touch upon telemedicine and 1-rupee-a-day health insurance with a biometric card…

500 Rupee (10$) Indian Laptop Coverage

First exposure was a couple of years back, as per this news piece from Information Week. Some hunting through the infoDev news archives for low-cost ICT devices and calls to HRD, VIT, IISc and IITM were in order, which led to a 2006 OLPC news item ridiculing the sheer audacity of the effort, while the comments have some interesting URLs.

Moving along, here is the PCWorld article of 29 July 2008 at 6:30pm which reported on India developing a 10$ laptop. Here is the PCWorld retraction at 11:00pm the same day saying that the minister of state for higher education, D. Purandeswari, apparently missed a zero – bringing the laptop to a sane 100$. For emphasis, here is the press information bureau transcript that corrects the blunder.

Coming to the present, for starters, here is the press release from the National Knowledge Commission on the launch of the National Mission on Education through ICT. Ironically, there is no website yet and the Sakshat ‘One Stop Learning Stop’ portal is down. Interestingly, it makes NO mention of the laptop, which makes one wonder where all this came from.

Anyhoo… the press release mentions several other missions/schemes such as the National Translation Mission, Vocational Education Mission, National Knowledge Network, Scheme of ICT @ Schools etc. and stresses that synergies will be sought and duplication averted.

On Feb 1st, the Better India RSS feed was the re-connector. The article is here, prompting me to check the calendar that it was indeed February and not April. The news source is Times of India.

Atanu Dey attacked the news by blaming the press for not applying simple common-sense. Apparently, media buys anything nowadays. His article is here and his source is here.

Amulya Gopalakrishnan of the Indian Express burns the midnight oil and reports here. She starts out by being cynical but takes a U-turn of sorts in the end. It has some OLPC background too.

The news has spread appreciably. Some techie posts are up here and here. The news has even reached far-flung places like Italy, Chile and Hungary. What’s more, it has been translated. Human, this is catching on! One wonders how much media will alight in Tirupathi on 3 Feb 2009.

All said and done, it could well be that the ‘laptop’ is really an access device à la the ones offered by Novatium/Nivio/Nimbus, albeit with a display. There are some hints in the Engadget feed and PCWorld article for the same. Nimbus officially will give the device puppy to anyone for 50$. The Novatium NetPC with the BSNL offer is in the same ballpark but it is more like a mobile business model. Nivio have a companion device but also have something in stealth.

Or maybe, as Post Chronicle guesses, the thing that will be unveiled on Feb 3 is only a prototype and will actually be available in the market in 2010, by which time OLPC XoXo will be in the 75$ range. With company overheads paid for in rupees (if at all), unlike dollars for the swanky OLPC outfit with thousands of dollars per month devoted to Negroponte globe-trotting, it might be that the Indian claim is not outlandish after all. But I digress. I have seen Chinese mobile phones with 3-inch touchscreens being peddled in Delhi bazaars for 15$ but they could be stolen maal. The 20$ price-tag for a mobile-platform based device might not be impossible (with some heavy subsidies and election promiseering, of course). However, 2GB RAM, WiFi and other specs seem suspect and can be red herrings.

I can say this with some speck of authority because, at MCe2, we are planning on what we call an ‘education access terminal’ which we hope to deliver at a 75$ price-point. Note: It is price, not cost.

Arty Ode to WWW

I feel like my neurons are connecting to the collective sentient consciousness of an entire species (well, those who have connectivity) residing on a little blue rock…

Toiling Indian Einsteins – 7 Nov 2008

[edit: 20090212] Apparently, Stephen Jay Gould had said something similar in the past, “I am somehow less interested in the weight and convolutions of Einstein’s brain than in the near certainty that people of equal talent have lived and died in cotton fields and sweatshops.” [via A Bit of Piano Music by 6 year old Ethan Bortnick]

OLPC Rural ICT Conundrum – Energy

SIMLeach – Xinthe Headstart

MultiXP – Miragic Future

MultiXP – Client-side FFrogs

Greasemonkey – What Is?

Greasemonkey by itself does none of these things. In fact, after you install it, you won’t notice any change at all… until you start installing what are called “user scripts”. A user script is just a chunk of Javascript code, with some additional information that tells Greasemonkey where and when it should be run. Each user script can target a specific page, a specific site, or a group of sites. A user script can do anything you can do in Javascript. In fact, it can do even more than that, because Greasemonkey provides special functions that are only available to user scripts.

There is a Greasemonkey script repository that contains hundreds of user scripts that people have written to scratch their own personal itches. Once you write your own user script, you can add it to the repository if you think others might find it useful. Or you can keep it to yourself, content in the knowledge that you’ve made your own browsing experience a little better.
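
To make the "chunk of Javascript plus some additional information" idea concrete, here is a minimal sketch of what a user script could look like. The script name, namespace, target site and the `markTitle` helper are all made up for illustration; only the commented metadata block at the top is the part Greasemonkey reads to decide where the script runs.

```javascript
// ==UserScript==
// @name        Title Marker (hypothetical example)
// @namespace   http://example.com/userscripts
// @description Prefixes the page title on matching pages
// @include     http://example.com/*
// ==/UserScript==

// Pure helper (hypothetical), kept apart from the DOM so it can be checked on its own.
function markTitle(title) {
  var prefix = '[GM] ';
  // Do not add the prefix twice if the script runs again on the same page.
  return title.indexOf(prefix) === 0 ? title : prefix + title;
}

// Only touch the page when a browser document is actually present.
if (typeof document !== 'undefined') {
  document.title = markTitle(document.title);
}
```

The `@include` line is what limits the script to a specific page, site, or group of sites; everything below the metadata block is ordinary Javascript.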

There is also a Greasemonkey mailing list, where you can ask questions, announce user scripts, and discuss ideas for new features. The Greasemonkey developers frequent the list; they may even answer your question!

Why this book?

Dive Into Greasemonkey grew out of discussions on the Greasemonkey mailing list, and out of my own experience in writing user scripts. After only a week on the list, I was already seeing questions from new users that had already been answered. With only a few user scripts under my belt, I was already seeing common patterns, chunks of reusable code that solved specific problems that cropped up again and again. I set out to document the most useful patterns, explain my own coding decisions, and learn as much as I could in the process.

This book would not be half as good as it is today without the help of the Greasemonkey developers, Aaron Boodman and Jeremy Dunck, and the rest of the people who provided invaluable feedback on my early drafts. Thank you all.

Hello to TCJJ!

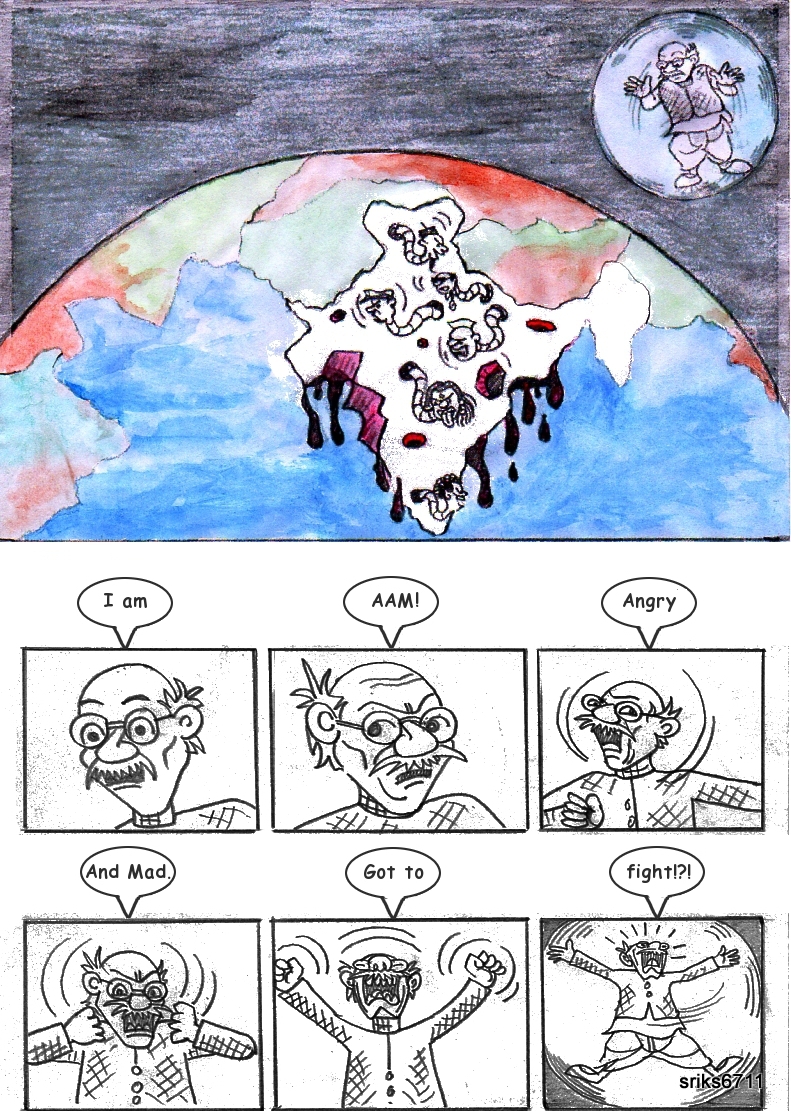

“The Citizens Journalism Journal” (TCJJ) is an open journal in which we (the citizens) will contribute cartoons/articles on the “citizen journalism” (CJ) phenomenon from a social, economic, research and worldly viewpoint and/or standpoint.

For starters, we will focus on a commentary of photo-journalism, picture-desks and similar. The journal will also seek to be a nice repository of links and research by/for/to the CJ community.

Specifically, TCJJ will have cartoons/articles on recurring themes including but not limited to –

“CJ Readers Digest”

“The CJ Effect”

“Beware of CJs” etc.

which will all be made clear as per the nature of the material that will be eventually published. We also solicit serious/academic matter.

TCJJ will have everything from subtle tips to blatant propaganda of the power wielded by CJ all done in a fun, delightful but yet thought-provoking way.

As an aside, TCJJ is a space to explore various other things of an artistic kind and display the work/portfolio of C-Works (a cartoonizing collaborative community open for syndication) and also to give wheels to the personal research on CJ of Srikant (a PhD student and a primary author/editor).

To that effect, the support of Kyle (founder of Scoopt), Nick (the collaborating artist) and Ali (the collaborating researcher), who will all be primary authors/editors, is dutifully acknowledged and respected. Without them and their help, this blog would never have existed.

Ideas for TCJJ

Here are some ideas that should one day make it to TCJJ in some capacity and in one form or the other.

[describe the ideas here with permission from K, A and N]

If anyone wants to pursue any of these, feel free but please let us know to avoid duplication.

SIE Freshers Fair Idea

We are trying to come up with a marketing plan to recruit some freshers into the society. Let us see… While we are on the topic of marketing –

Who is the target population we wish to attract?

Not all students are enterprising (at least at 18 years). But there are always some who are. And if we look carefully at this species, we will always find that this specimen is competitive, smart (arguable), attention seeking etc. Nothing wrong with that.

I think a spirit of competitiveness is what is required. And this should be fun as well. Tell them subtly that this is what SIE is all about.

Something like a caption completion is creative, fun and competitive.

We should also put people in the spotlight while it is identifiable. Hmmm… an awards ceremony of sorts could do (with free food of course). Give them a taste of fame. With this as a vehicle, we could now entice them all together in a room and have them for an hour or two. Heckling in Freshers Fair is a bad idea since there is a lot of noise out there. We should distinguish ourselves.

“Wanna be popular?” – that could be a cheesy punchline. We could promise to put their photos or caption in the next newsletter. And we could make some posters leading people to our stall. “To be famous and popular, follow this path”. There should always be a profit motive. That goes without saying. This need not be money. But it will help.

Heck, if we have the time and are in the mood for fun, we can make some photoshoots and posters ourselves.

Have to post it to all and get a team since I am incapable of working on ideas alone. I can only work in teams (or rather, make teams work). Let us see how things go on.

Success of Telecentres in Brazil – Deeshaa

[via] David A. Wheeler Travelogue. 6th International Free Software Conference (FISL 6.0) in Porto Alegre, Brazil.

The combination of a government changing its own internal structure to widespread use of OSS/FS, plus telecenters that use OSS/FS, means that there’s a vast number of people who are already familiar with OSS/FS, and comfortable using it. Very interesting indeed. And I was very glad to hear the stories about people who started from nothing, were given a small starting help, and are now on their feet and helping others.

But those only describe the environment, not what happened. Part of the answer seems to be in their experience with ‘telecenters’ as a way to help the poor. The cities of Porto Alegre and Sao Paulo separately decided to start ‘telecenter’ programs to provide computer access to the poor (though the cities did consult with each other as each learned lessons). There were definitely problems at first; according to some, early efforts just plopped a center in an area and left the community to figure out what to do (which often led to disappointing results).

But after some teething pains, they’ve had remarkable successes. In Sao Paulo, they have about 200 centers, each with 20 computers, all running OSS/FS (using the Linux Terminal Server project results, driving the costs way down); one person estimates these 200 centers reach 700,000 people. Kids can only use them if they do well in school (encouraging school participation), and they’re really community centers where there are rooms to learn dancing, reading and writing, and so on. Adults can use the centers to print their business cards, send resumes, and so on, so that they can begin their own businesses or get a job.

They’ve had more success by first working with the communities to find out what they need, and then specializing the center to that community. I heard lots of great stories; for example, in one poverty-stricken inner-city community, its leaders noted that they had few resources, but the local kids loved to make music in the street. So their center specialized and became a music recording studio (all using OSS/FS, best as I understand it). They’ve already produced a CD (through a lot of people working together), with more on the way. This not only brings in money, but possibly even more importantly, there’s a sense of pride in that community that was not there before. The point of these centers is simply to help people help themselves. The telecenters have to be managed and self-supported by the community; it’s not a continuous giveaway. And they’re built using an approach they call a “public-private partnership”.

In more recent Brazilian elections, a new Brazilian president was elected from the same party as the leaders in Sao Paulo and Porto Alegre. He thought these telecenters worked well, so now they’re spreading around Brazil.

Google Desktop as LAN Search

Decided to play with GoogleDesktop at the weekend and managed to put together a fully working app to act as a search engine for our LAN.

Here’s how I did it:

1) As has already been discussed, GD can index mapped drives with a little push. So I mapped all the network shares on our LAN and used “ReIndex-UPDATE-1.0.exe”, available from reindex, to touch the drives.

This fully indexed all the shares on the network, so that’s the backend sorted. I also set up scheduling for “ReIndex-UPDATE-1.0.exe” so that it would stay up-to-date.

2) Secondly, I tried using DNKA to share the search across the LAN. This worked correctly; however, the links to each file went through GD’s ‘redir’ program. This meant that if machine B was searching using machine A (where GD is running) and wanted a file from machine C, then it would have to go B => A => C, which adds load to the network. So I wanted it to go B => C.

3) I installed Perl and Apache and wrote an app to act as a fully functional front end to GD. The code is available from here. Its main feature, apart from displaying the search results in a Google format, is changing the URLs so that GD’s ‘redir’ is not used.
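
The original front end was written in Perl and is not reproduced here. As a rough illustration of the URL-rewriting idea in step 3, the sketch below unwraps a redirect-style link so that machine B can fetch the file straight from machine C. The link shape (a path ending in `/redir` with the real target in a `url` query parameter), the host names and the `directLink` function are all assumptions made for the example; the actual Google Desktop format is not given in the post.

```javascript
// Hypothetical redirect link served by machine A:
//   http://machine-a/redir?url=file%3A%2F%2Fmachine-c%2Fshare%2Fdoc.txt
// The real Google Desktop 'redir' format may differ; this shape is assumed.

function directLink(href) {
  var parsed;
  try {
    parsed = new URL(href);
  } catch (e) {
    return href; // not an absolute URL, leave it untouched
  }
  // Only rewrite links that go through the (assumed) redirect endpoint.
  if (!/\/redir$/.test(parsed.pathname)) {
    return href;
  }
  // searchParams.get() returns the percent-decoded target, or null if absent.
  var target = parsed.searchParams.get('url');
  return target || href;
}
```

Applied to every result link before the page is rendered, this turns the B => A => C hop into B => C, while links that do not match the assumed pattern pass through unchanged.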

Thinkers, Visionaries and Philosophers

- Great Thinkers and Visionaries

- Albert Einstein’s life with lots of photos.

- BluePete’s list of biographies

- Another list of biographies, organized by sciences.

- Proof of Fermat’s last theorem, a must read. Absolute passion for solving the problem is matched by nothing else.

- UG KrishnaMurti’s site, groundbreaking thinking. Kind of difficult to digest. Very good read, though.

- Philosophy Pages

- Exploring Creation/Evolution controversy, read the arguments against creationism.

- Evolution vs Creationism, a collection of links.

- Is creationism science? Any scientist (including me) would immediately say – of course not! But heck, people still pursue scientific creationism.

- Atheism, Agnosticism etc.

Smart home dream could be for all

[via http://news.bbc.co.uk/go/pr/fr/-/2/hi/technology/4607747.stm]

Smart homes in which a single button controls lighting, heating, security, music, film – everything digital – have long been promised, but have never quite been delivered.

This is partly down to technology that cannot talk to each other. It has also traditionally been a dream that only the uber-rich can make reality. But with rising broadband speeds and connections, the rise of wireless networks, and cheaper more powerful machines in homes, it is getting easier to realise the smart home dream. A lot more now can be controlled from one central machine, with one central and easy interface, which is what convergence is supposed to be about. Hooking devices together, either wirelessly or with cables, is another matter.

Technical know-how is just one of the stumbling blocks many face when trying to work out why one gadget will not talk to another. Homes that are smart are not just about being able to send pictures and video to different rooms either. It is also about the more mundane technologies in life, such as heating and lighting, and security technologies. Central locking for homes, for example, could all be controlled through fobs in the near future, or even through biometrics. Will Levy, founder of Touch of a Button, imagines one day soon being able to pick up the phone directory and easily find the equivalent of a registered “digital plumber”.

For a reasonable fee, they could come and install your fresh bit of kit, advise on security, and be on hand at the end of an e-mail or phone for aftercare services. Mr Levy’s vision of “digital plumbing” is squarely aimed at ordinary people who want their homes smart and more connected. “There is a huge amount of integration needed between different products,” he told the BBC News website. “There are hundreds of companies which are home automation installers. The problem for me is that the starting price for that is a minimum of £27,000.” That is not the way to push convergence and smart home technologies out to the mass market, he says. “These are not distant future technologies, they are all out on the shelf today, and they are just a few hundred pounds.” He thinks there need to be more qualified people who can take the ordinary non-technical person step-by-step through the set-up and the integration of technologies with other systems around the home. “It could be very much like getting the plumber around,” says Mr Levy. “I could see in five or 10 years’ time having them in the Yellow Pages alongside plumbers or electricians that you call out.”

According to research by US digital home consultants, The Diffusion Group (TDG), more than half of US households are interested in some sort of home control system, if the price tag is less than $200. TDG also found that global home networking is set to grow from 35 million households in 2004 to more than 162 million by 2010. With that, says TDG, the number of devices and gadgets that will be able to use that network will rise from 108 million in 2004 to just shy of one billion by 2010.

Instead of three devices able to talk on the network to others in every household in 2004 on average, there will be six.
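
The "three now, six later" claim can be checked against the TDG figures quoted above with a quick back-of-the-envelope calculation. This is only a sketch: "just shy of one billion" is rounded up to exactly one billion here, which is an assumption, and the function name is made up for the example.

```javascript
// Figures as quoted from the TDG research.
var households2004 = 35e6;
var devices2004 = 108e6;
var households2010 = 162e6;
var devices2010 = 1e9; // assumption: "just shy of one billion" rounded up

// Average number of networked devices per networked household.
function devicesPerHousehold(devices, households) {
  return devices / households;
}

var avg2004 = devicesPerHousehold(devices2004, households2004);
var avg2010 = devicesPerHousehold(devices2010, households2010);

console.log(avg2004.toFixed(1)); // prints "3.1"
console.log(avg2010.toFixed(1)); // prints "6.2"
```

So the household and device projections are at least consistent with the per-household averages of roughly three and six.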

Others predict that 23 million European households will be using wireless home networks to share media content by 2009. Despite these healthy-looking predictions, Intel research on the 21st Century living room revealed that 42% of Brits complained that technology was already crowding them out of their own houses. One in four living rooms are stacking up more than seven separate technology devices. The average Brit does not have an American style open-plan living space either. Rooms are smaller and ceilings are lower. But if there are going to be digital plumbers who can come around and fiddle with plugs and networks, there needs to be an industry standard qualification to protect against digital cowboys.

The CompTIA international standard for teaching such skills is being promoted in certain areas of the UK, such as Yorkshire.
